This is part 2 of a four-part series of articles in which I explain how I discovered and purchased my laptop by building a web application that scrapes a local PC parts forum and sends automated email alerts when posts featuring specific keywords appear:

- Part 1: Let Your Next Laptop Find YOU!

- Part 2: Django and Scrapy (this page)

- Part 3: Celery, Mailgun and Flower

- Part 4: Deploying and using CarbAlert

CarbAlert on GitHub: https://github.com/dinofizz/carbalert

Django

I am using Django to manage the search phrases and email addresses. Django is a Python web framework known for including many extras right out of the box. I am taking advantage of two specific extras: Django’s built-in ORM and the Django admin console.

Django ORM

Django's ORM and admin console make it easy to create search phrases which are linked to specific users. I created two Django model classes, SearchPhrase and Thread. Each SearchPhrase has a many-to-many relationship with the users who should be emailed (email_users), and there is a many-to-many relationship between search phrases and threads: a thread may contain multiple search phrases, and a search phrase may be found in many threads.

```python
from django.contrib.auth.models import User
from django.db import models


class SearchPhrase(models.Model):
    phrase = models.CharField(max_length=100, blank=True)
    email_users = models.ManyToManyField(User, related_name="email_users")

    def __str__(self):
        return self.phrase


class Thread(models.Model):
    thread_id = models.CharField(max_length=20, blank=True)
    title = models.CharField(max_length=200, blank=True)
    url = models.CharField(max_length=200, blank=True)
    text = models.TextField(max_length=1000)
    datetime = models.DateTimeField(blank=True)
    search_phrases = models.ManyToManyField(SearchPhrase)

    def __str__(self):
        return self.title
```

Link to code: https://github.com/dinofizz/carbalert/blob/master/carbalert/carbalert_django/models.py

Django & PostgreSQL

Django can be configured to work with several database backends through the settings.py configuration file. I decided to use PostgreSQL just to see and learn what would be involved. It was as easy as installing the psycopg2 package, which provides a PostgreSQL database adapter for Python, and updating the default database configuration in settings.py to connect to a PostgreSQL database:

```python
...
DATABASES = {
    'default': {
        'ENGINE': 'django.db.backends.postgresql',
        'NAME': 'postgres',
        'USER': 'postgres',
        'HOST': 'db',
        'PORT': 5432,
    }
}
...
```

The “db” host name resolves to the db service (a named Docker container) defined in the docker-compose.yml file:

```yaml
...
db:
  image: postgres
  volumes:
    - postgres_data:/var/lib/postgresql/data/
...
```

Django Admin Console and Registration with Email Address

CarbAlert users are notified of search phrase “hits” via email. By default, creating a user through the Django admin console does not require an email address, only a username and password. Associating an email address with the new user then takes an extra editing step after the username and password have been saved. To eliminate this extra step I changed the Django admin console so that an “email address” field is shown and required when adding a user. My implementation is based on a StackOverflow answer:

```python
from django.contrib import admin
from django.contrib.auth.admin import UserAdmin
from django.contrib.auth.forms import UserCreationForm, UserChangeForm
from django.contrib.auth.models import User

from .models import Thread, SearchPhrase


class EmailRequiredMixin(object):
    def __init__(self, *args, **kwargs):
        super(EmailRequiredMixin, self).__init__(*args, **kwargs)
        self.fields["email"].required = True


class MyUserCreationForm(EmailRequiredMixin, UserCreationForm):
    pass


class MyUserChangeForm(EmailRequiredMixin, UserChangeForm):
    pass


class EmailRequiredUserAdmin(UserAdmin):
    form = MyUserChangeForm
    add_form = MyUserCreationForm
    add_fieldsets = (
        (
            None,
            {
                "fields": ("username", "email", "password1", "password2"),
                "classes": ("wide",),
            },
        ),
    )


admin.site.unregister(User)
admin.site.register(User, EmailRequiredUserAdmin)
admin.site.register(Thread)
admin.site.register(SearchPhrase)
```

Link to code: https://github.com/dinofizz/carbalert/blob/master/carbalert/carbalert_django/admin.py
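Why does the mixin come first in `class MyUserCreationForm(EmailRequiredMixin, UserCreationForm)`? Python's method resolution order (MRO) calls `EmailRequiredMixin.__init__` first. Its `super()` call then runs the form's own `__init__`, which builds `self.fields`, and only afterwards is `email` marked as required. The sketch below demonstrates this with plain-Python stand-ins. `FakeField`, `FakeUserCreationForm` and `WrongOrderForm` are hypothetical and only mimic the relevant behaviour; they are not Django's classes.

```python
class FakeField:
    """Hypothetical stand-in for a Django form field."""

    def __init__(self, required=False):
        self.required = required


class FakeUserCreationForm:
    """Hypothetical stand-in for UserCreationForm: builds its fields in __init__."""

    def __init__(self, *args, **kwargs):
        self.fields = {
            "username": FakeField(required=True),
            "email": FakeField(required=False),  # optional by default
        }


class EmailRequiredMixin:
    def __init__(self, *args, **kwargs):
        # Run the next __init__ in the MRO first, so self.fields exists...
        super().__init__(*args, **kwargs)
        # ...then make the email field mandatory.
        self.fields["email"].required = True


class MyUserCreationForm(EmailRequiredMixin, FakeUserCreationForm):
    pass


class WrongOrderForm(FakeUserCreationForm, EmailRequiredMixin):
    # FakeUserCreationForm.__init__ comes first and never calls super(),
    # so EmailRequiredMixin.__init__ is never reached.
    pass


print([cls.__name__ for cls in MyUserCreationForm.__mro__])
# ['MyUserCreationForm', 'EmailRequiredMixin', 'FakeUserCreationForm', 'object']
print(MyUserCreationForm().fields["email"].required)  # True
print(WrongOrderForm().fields["email"].required)  # False
```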

The Django web app is run from a Docker container as described in the docker-compose.yml file:

```yaml
...
web:
  build: .
  working_dir: /code/carbalert
  command: gunicorn carbalert.wsgi -b 0.0.0.0:8000
  volumes:
    - "/static:/static"
  ports:
    - "8000:8000"
  depends_on:
    - db
...
```

Scrapy

In order to scan the latest Carbonite posts I am using Scrapy, a Python framework for scraping web sites. I had previously used BeautifulSoup to scrape web sites for HTML content of interest, but after listening to Episode #50 (Web scraping at scale with Scrapy and ScrapingHub) of the Talk Python To Me podcast I decided to give Scrapy a go.

Like BeautifulSoup, Scrapy can extract content from HTML, but it also fetches the pages itself and adds features such as item pipelines, an interactive shell, logging and more. Scraping logic for a specific site or web resource lives in a class which extends one of the “Spider” base classes. These spiders provide built-in mechanisms for navigating and collecting data.

I did consider using the RSS feed provided by Carbonite for each forum, but the post data was truncated and did not contain the full text for me to search for keywords of interest.

I did look over the Carbonite Terms and Conditions and there is (currently) no mention of any restriction on scraping the site. After getting an alert I would still have to click through to the thread in question and use the internal messaging tool to negotiate the purchase.

Scrapy shell

Scrapy provides an interactive shell which can be used to inspect the response to a request. This is useful for trying out CSS and XPath selectors while working out the best way to get to the data you need. If you start the Scrapy shell with a URL as a command line argument, Scrapy runs a default Spider to fetch that URL and drops you into the interactive shell with a listing of the objects available for inspection. Here is what it looks like if I run the shell with the Carbonite laptop forum URL:


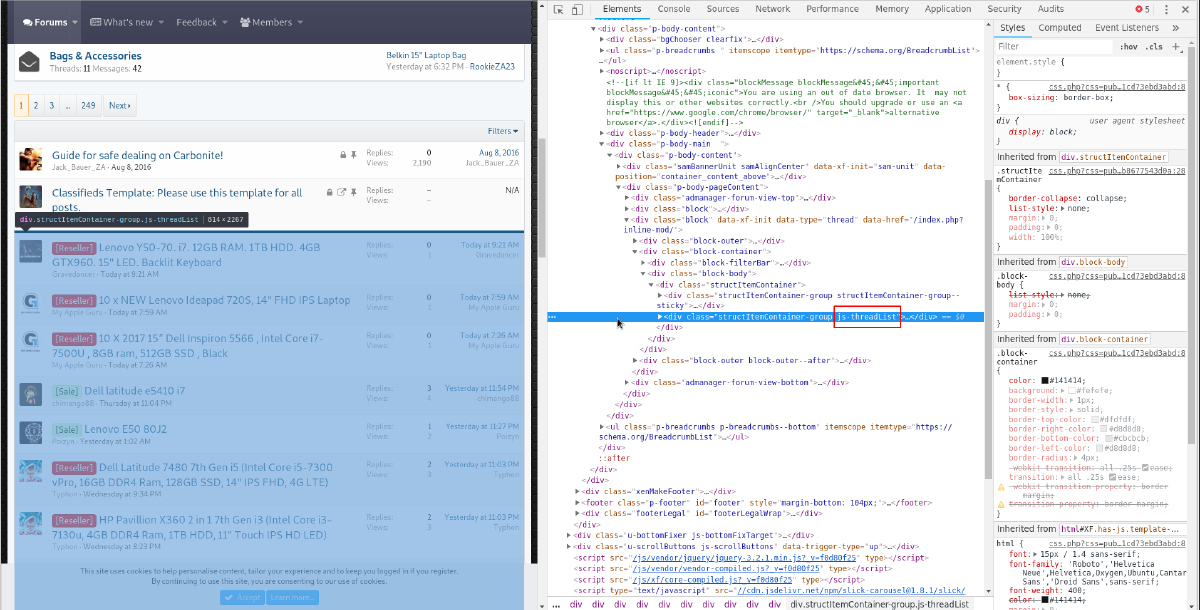

I knew I needed to find all the posts on the first page of the Carbonite Laptops forum. Using Chrome dev tools I determined that all of the threads sit inside a div with the .js-threadList CSS class.

I could also see that individual threads are identified by another CSS class, .structItem--thread. By chaining Scrapy selector calls in the shell (first .js-threadList, then .structItem--thread within it) I can create a list of all the individual threads.


In addition to CSS selectors, Scrapy can locate and extract HTML elements using XPath expressions. Continuing with the list of threads, I extracted the title text of the first thread using a combination of CSS selectors and an XPath expression.


CarbSpider

Using what I learned from poking around in the Scrapy shell, I put all of the scraping logic into a Scrapy Spider. This Python class knows how to scan the Carbonite “Laptops” forum index page, which lists the 30 latest posts.

When a Spider runs, Scrapy generates a Request object for each URL in the Spider's start_urls list. Once a URL has been fetched, Scrapy calls back into the Spider's parse method, passing in a Response object containing the downloaded page:

```python
import scrapy


class CarbSpider(scrapy.Spider):
    name = "carb"
    start_urls = [
        'https://carbonite.co.za/index.php?forums/laptops.32/',
    ]

    def parse(self, response):
        # Insert response handling here
        pass
```

My “CarbSpider” is used to obtain metadata such as the thread title, thread URL and a unique thread ID. I also want to search the body text of the initial thread post for keywords of interest. With Scrapy I can direct my Spider to scrape an additional URL (the thread post itself) with its own callback function, which parses that response. Here is the full code of my CarbSpider, which loops through all threads found on the Laptops forum index page and in turn scrapes each of those thread posts for additional data (the initial post's body text and timestamp):

```python
import logging
import re

import html2text
import scrapy


class CarbSpider(scrapy.Spider):
    name = "carb"
    start_urls = ["https://carbonite.co.za/index.php?forums/laptops.32/"]

    def parse(self, response):
        logging.info("CarbSpider: Parsing response")
        threads = response.css(".js-threadList").css(".structItem--thread")
        logging.info(f"Found {len(threads)} threads.")

        for thread in threads:
            item = {}

            thread_url_partial = (
                thread.css(".structItem-cell--main")
                .xpath(".//a")
                .css("::attr(href)")[1]
                .extract()
            )
            thread_url = response.urljoin(thread_url_partial)
            logging.info(f"Thread URL: {thread_url}")
            item["thread_url"] = thread_url

            thread_title = (
                thread.css(".structItem-cell--main")
                .css(".structItem-title")
                .xpath(".//a/text()")
                .extract_first()
            )
            logging.info(f"Thread title: {thread_title}")
            item["title"] = thread_title

            thread_id = re.findall(r"\.(\d+)/", thread_url_partial)[0]
            item["thread_id"] = thread_id
            logging.info(f"Thread ID: {thread_id}")

            request = scrapy.Request(item["thread_url"], callback=self.parse_thread)
            request.meta["item"] = item
            yield request

    def parse_thread(self, response):
        item = response.meta["item"]

        thread_timestamp = (
            response.css(".message-main")[0]
            .xpath(".//time")
            .css("::attr(datetime)")
            .extract_first()
        )
        logging.info(f"Thread timestamp: {thread_timestamp}")
        item["datetime"] = thread_timestamp

        html = response.css(".message-main")[0].css(".bbWrapper").extract_first()
        converter = html2text.HTML2Text()
        converter.ignore_links = True
        thread_text = converter.handle(html)
        logging.info(f"Thread text: {thread_text}")
        item["text"] = thread_text

        yield item
```

Link to code: https://github.com/dinofizz/carbalert/blob/master/carbalert/carbalert_scrapy/carbalert_scrapy/spiders/carb_spider.py
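The thread ID and absolute URL in parse() come from two small string operations. The sketch below runs them on a hypothetical relative link; the exact link format on the forum is an assumption, chosen to match the regular expression the spider uses. Outside Scrapy, `urllib.parse.urljoin` with the forum index URL gives the same result as `response.urljoin()` for a link like this.

```python
import re
from urllib.parse import urljoin

index_url = "https://carbonite.co.za/index.php?forums/laptops.32/"
# Hypothetical relative link as it might appear in a thread's href attribute.
thread_url_partial = "/index.php?threads/example-laptop-for-sale.1001/"

# The leading slash makes this an absolute path, so the index page's path and query are replaced.
thread_url = urljoin(index_url, thread_url_partial)

# Same pattern as CarbSpider: a dot, then one or more digits (captured), then a slash.
thread_id = re.findall(r"\.(\d+)/", thread_url_partial)[0]

print(thread_url)  # https://carbonite.co.za/index.php?threads/example-laptop-for-sale.1001/
print(thread_id)  # 1001
```

If a link ever lacked the numeric suffix, `findall(...)[0]` would raise an `IndexError`, so `re.search` with a `None` check is a more defensive alternative.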

As the thread post body is formatted in HTML (it is, of course, presented on a web page), I use the html2text library to convert it to plain text. Setting ignore_links = True keeps the link text but drops the link URLs.

It may be a bit confusing to follow what happens to the data I am collecting, so here is an attempt to explain:

For each thread found on the index page, parse() builds an item dictionary containing the thread URL, title and ID, attaches it to a new Request for the thread page via request.meta, and yields that request. Scrapy fetches the thread page and calls parse_thread() with the response. The same meta dictionary is available as response.meta, so parse_thread() retrieves the item, adds the timestamp and body text, and yields the completed item. Scrapy then passes every item yielded by a callback on to the item pipeline (more on this below).

(You may be better off following the documentation for Scrapy.)
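To make that flow concrete, here is a toy, stdlib-only model of what Scrapy's engine does with the objects a callback yields. It is not Scrapy's implementation: `ToyRequest`, `ToyResponse`, `run()` and the fake page contents are all hypothetical, and there is no network access, scheduling policy or concurrency (real Scrapy fetches pages concurrently, so item order is not guaranteed). It shows the two rules that matter here: a yielded request is fetched and its response is handed to the request's callback along with the same meta, and a yielded dict goes to the pipeline.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToyRequest:
    url: str
    callback: Callable
    meta: dict = field(default_factory=dict)


@dataclass
class ToyResponse:
    url: str
    body: str
    meta: dict  # mirrors Scrapy exposing the request's meta as response.meta


# Hypothetical "web": URL -> page body.
PAGES = {"index": "t1,t2", "t1": "first post body", "t2": "second post body"}


def run(start_request, pipeline):
    """Process requests until none are left, routing yielded objects by type."""
    queue = [start_request]
    while queue:
        request = queue.pop(0)
        response = ToyResponse(request.url, PAGES[request.url], request.meta)
        for result in request.callback(response):
            if isinstance(result, ToyRequest):
                queue.append(result)  # schedule another page fetch
            else:
                pipeline.append(result)  # items go to the item pipeline


def parse(response):
    for thread_id in response.body.split(","):
        item = {"thread_id": thread_id}
        # The partially filled item travels with the request via meta.
        yield ToyRequest(thread_id, callback=parse_thread, meta={"item": item})


def parse_thread(response):
    item = response.meta["item"]
    item["text"] = response.body
    yield item  # handed to the pipeline, not back to parse()


collected = []
run(ToyRequest("index", callback=parse), collected)
print(collected)
# [{'thread_id': 't1', 'text': 'first post body'}, {'thread_id': 't2', 'text': 'second post body'}]
```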

CarbPipeline

Thread items yielded by the CarbSpider are then passed into a Scrapy Item Pipeline for further processing. I created a pipeline (the CarbalertPipeline class in the code below) which performs the following, given the previously scraped thread item:

- Given the scraped thread ID, checks whether a Thread object already exists in the database. If I have already saved this thread, I skip any further processing of the item. While writing this blog post I realised that newly added search phrases will not trigger on previously saved threads, even if those threads are scraped again when a new post brings them back to the front page. # TODO…

- Checks if any of the search phrases are found in the thread title or thread body, using a case-insensitive substring match.

- If a search phrase is found, it is added to the “mailing list” dictionary, where the user is the key and a list of all of that user's search phrase hits is the value. Each hit is appended to a new or existing list for that user; this allows a user to be notified once about a thread of interest even if it contains multiple search phrase hits for that user.

- A Thread object containing all relevant metadata is saved to the database.

- Once the thread item has been checked against all search phrases, an asynchronous email task is kicked off for each user with search phrase hits for this thread: one email per user, listing all of that user's search phrase hits.
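The matching and grouping steps above can be sketched without Django. Here a plain dict stands in for the SearchPhrase and User models, and `find_hits()` and the sample data are hypothetical; the matching rule is the same case-insensitive substring test the pipeline uses.

```python
from collections import defaultdict


def find_hits(title, text, phrase_users):
    """Map each user to the search phrases found in a thread's title or body.

    phrase_users is a hypothetical stand-in for the SearchPhrase table:
    {phrase: [user, ...]}.
    """
    haystacks = (title.lower(), text.lower())
    email_list = defaultdict(list)
    for phrase, users in phrase_users.items():
        needle = phrase.lower()
        if any(needle in haystack for haystack in haystacks):
            for user in users:
                email_list[user].append(phrase)  # one list of hits per user
    return dict(email_list)


phrase_users = {
    "xps": ["alice@example.com"],
    "thinkpad": ["alice@example.com", "bob@example.com"],
    "macbook": ["bob@example.com"],
}

hits = find_hits("WTS: ThinkPad T480", "Also selling a Dell XPS charger.", phrase_users)
print(hits)
# {'alice@example.com': ['xps', 'thinkpad'], 'bob@example.com': ['thinkpad']}
```

With this rule an empty phrase matches every thread, since `""` is a substring of any string. The SearchPhrase model allows blank phrases (`blank=True`), so skipping empty phrases may be worth considering.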

Code below:

```python
# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: http://doc.scrapy.org/en/latest/topics/item-pipeline.html

import logging

import maya

# The path for this is weird. I spent some time trying to get it to work with a more sane import statement
# but I was not (yet) successful.
from carbalert_django.models import Thread, SearchPhrase
from carbalert.carbalert_scrapy.carbalert_scrapy.tasks import send_email_notification


class CarbalertPipeline(object):
    def process_item(self, item, spider):
        logging.info("CarbalertPipeline: Processing item")

        thread_id = item["thread_id"]
        logging.info(f"Checking if thread ID ({thread_id}) exists in DB...")
        if Thread.objects.filter(thread_id=thread_id).exists():
            logging.debug("Thread already exists.")
            return item

        logging.info("No existing thread for ID.")

        title = item["title"]
        text = item["text"]
        thread_url = item["thread_url"]
        thread_datetime = maya.parse(item["datetime"])

        email_list = {}
        # Collect every matching SearchPhrase so all of them are linked to the thread
        # (and so nothing is referenced before assignment when no phrase matches).
        matched_phrases = []

        # Iterating model objects directly avoids a second lookup per matching phrase.
        for search_phrase_object in SearchPhrase.objects.all():
            search_phrase = search_phrase_object.phrase
            logging.info(f"Scanning title and text for search phrase: {search_phrase}")
            if (
                search_phrase.lower() in title.lower()
                or search_phrase.lower() in text.lower()
            ):
                logging.info(f"Found search phrase: {search_phrase}")
                matched_phrases.append(search_phrase_object)
                for user in search_phrase_object.email_users.all():
                    logging.info(
                        f"Found user {user} associated to search phrase {search_phrase}"
                    )
                    if user in email_list:
                        email_list[user].append(search_phrase)
                    else:
                        email_list[user] = [search_phrase]

        logging.info(f"Saving thread ID ({thread_id}) to DB.")
        try:
            thread = Thread.objects.get(thread_id=thread_id)
        except Thread.DoesNotExist:
            thread = Thread()
        thread.thread_id = thread_id
        thread.title = title
        thread.text = text
        thread.url = thread_url
        thread.datetime = thread_datetime.datetime()
        # The thread must be saved before many-to-many links can be added.
        thread.save()
        if matched_phrases:
            thread.search_phrases.add(*matched_phrases)

        local_datetime = thread_datetime.datetime(to_timezone="Africa/Harare").strftime(
            "%d-%m-%Y %H:%M"
        )

        for user in email_list:
            logging.info(
                f"Sending email notification to user {user} for thread ID {thread_id}, thread title: {title}"
            )
            # .delay() queues the Celery task instead of sending the email inline.
            send_email_notification.delay(
                user.email, email_list[user], title, text, thread_url, local_datetime
            )

        return item
```

Link to code: https://github.com/dinofizz/carbalert/blob/master/carbalert/carbalert_scrapy/carbalert_scrapy/pipelines.py
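The pipeline uses maya to parse the scraped timestamp and render it in local time for the email. For readers who would rather avoid the extra dependency, here is a stdlib-only sketch of the same step. It assumes the timestamp arrives as an ISO 8601 string with a numeric UTC offset (the example values are hypothetical), and it uses a fixed UTC+2 offset for Africa/Harare, which does not observe daylight saving time. On Python 3.9+, `zoneinfo.ZoneInfo("Africa/Harare")` would be the tz-database alternative.

```python
from datetime import datetime, timedelta, timezone

# Africa/Harare is UTC+2 all year; a fixed offset avoids needing tz data installed.
HARARE = timezone(timedelta(hours=2), "CAT")


def to_local_string(timestamp):
    """Parse an ISO 8601 timestamp with an offset and format it in Harare time."""
    # %z accepts offsets such as +0000 (and +00:00 on Python 3.7+).
    parsed = datetime.strptime(timestamp, "%Y-%m-%dT%H:%M:%S%z")
    return parsed.astimezone(HARARE).strftime("%d-%m-%Y %H:%M")


print(to_local_string("2018-10-14T07:43:21+0000"))  # 14-10-2018 09:43
print(to_local_string("2018-10-14T23:30:00+0000"))  # 15-10-2018 01:30
```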

Next post in series: Part 3: Celery, Mailgun and Flower